Build novel AI solutions on your data

Combine proprietary data with human expertise to create AI systems that competitors can't replicate off the shelf.

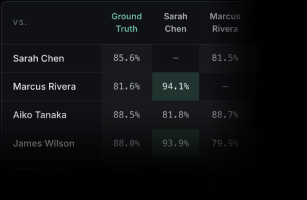

Know before you deploy with model benchmarks

Score outputs across model versions, compare tradeoffs between frontier models, and predict real-world performance on the tasks your users actually care about.

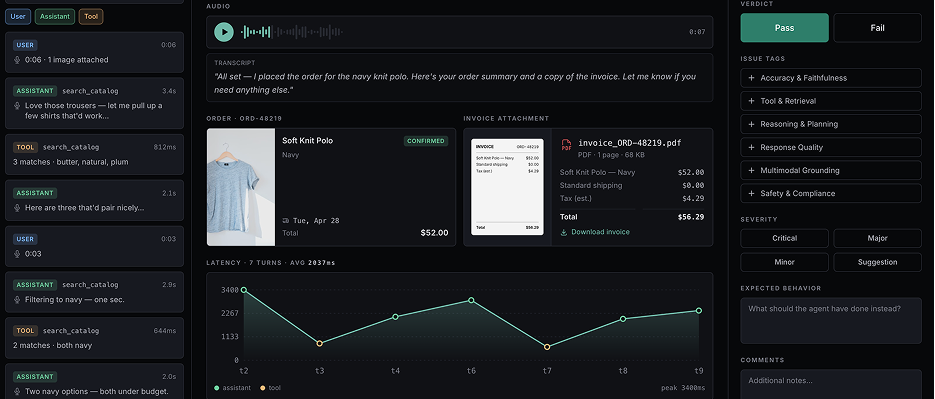

Continually improve AI from real-world usage

Most teams collect evaluation traces but never act on them. Label Studio turns edge cases into labeled training data so models keep getting better after launch.